Learn about the benefits of a network built with white box (whitebox) – or branded white box – switches:

- Simplicity

- Automation

- Lower cost

- Improved performance

- End of chassis switches and switch stacks

- No vendor lock-in

A long overdue change for the better in enterprise networks

Using white box (whitebox) switches to build enterprise networks represents a dramatic change from the way we’ve built networks for decades: that is, relying on complex, expensive, largely proprietary hardware and software from a handful of vendors. White box (and branded brite box) switches and networks offer a viable alternative, with open platforms that simplify network operations and improve performance – while dramatically lowering costs.



White box switch hardware

Exactly the same as the brand names, from the same manufacturers – without the hefty price


White box switches and networking in practice

Handle the edge traffic onslaught, improve performance, add SDN for security and control


Simplified operations & automation

AmpCon™: the first automation software suite for enterprise white box switches and networks

White box switch hardware

White box switches share three basic attributes: they are built on commodity hardware, use chipsets (ASICs) from established vendors, and run your choice of NOS. Brite box switches are similar, but carry a specific brand, such as Dell EMC; still, the underlying concept is the same.

At the hardware level, white box switches are based on commodity, “bare metal” hardware from manufacturers such as Accton, Delta Networks, Foxconn and Quanta Cloud Technology. These same players supply hardware to major networking industry vendors such as Cisco, Juniper and Arista.

The networking chipsets the hardware is based on likewise come from large, established vendors including Broadcom, Intel, Marvell and Mellanox. Chip vendors supply an application programming interface (API), which the NOS uses to control the ASIC. They also usually include a software development kit (SDK) for programming the ASIC, for example to set up VLANs or ACL entries.

White box networking equipment has significantly evolved over the last few years along with the overall switching market. Whereas the switches initially were limited in terms of speed and capacity, today white box switch vendors deliver models in an array of sizes and options that can meet even the most demanding enterprise switch requirements, including:

- 1G switch with 48 1G ports (or Power-over-Ethernet ports) and 4 10G ports

- 5G/10G 48-port PoE/MultiGig switches (for wireless network upgrades) with 4 25G plus 2 100G uplinks

- 10G switch with 48 10G ports and 6 40G ports

- 25G switch with 48 25G ports

- 40G switch with 32 40G ports

- 100G switch with 32 100G ports
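To make the port counts above more concrete, here is a minimal sketch that computes access capacity, uplink capacity and the resulting oversubscription ratio for two of the listed configurations. It assumes the higher-speed ports (the 4 10G ports and the 6 40G ports) are used as uplinks, which the list does not state; the `SwitchModel` class and its field names are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SwitchModel:
    """Port layout of a switch; treating the faster ports as uplinks is an assumption."""
    name: str
    access_ports: int
    access_gbps: int
    uplink_ports: int
    uplink_gbps: int

    def access_capacity(self) -> int:
        # Total bandwidth facing end devices, in Gbps.
        return self.access_ports * self.access_gbps

    def uplink_capacity(self) -> int:
        # Total bandwidth facing the rest of the network, in Gbps.
        return self.uplink_ports * self.uplink_gbps

    def oversubscription(self) -> float:
        # Ratio of access bandwidth to uplink bandwidth (1.0 means non-blocking).
        return self.access_capacity() / self.uplink_capacity()


# Port counts taken from the list above.
MODELS = [
    SwitchModel("1G switch", access_ports=48, access_gbps=1, uplink_ports=4, uplink_gbps=10),
    SwitchModel("10G switch", access_ports=48, access_gbps=10, uplink_ports=6, uplink_gbps=40),
]

for model in MODELS:
    print(
        f"{model.name}: {model.access_capacity()} Gbps access, "
        f"{model.uplink_capacity()} Gbps uplink, "
        f"{model.oversubscription():.2f}:1 oversubscription"
    )
```

Under that assumption, the 1G model comes out at 1.20:1 and the 10G model at 2.00:1. Which ratio is acceptable depends on the traffic mix at a given site.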

Essentially, white box switch hardware is the same standard hardware used by traditional networking vendors such as Cisco and Juniper, with one big difference: the network operating system that runs on top (well, that and the price).

Open network operating systems

Again, the main difference between white box switches and those from legacy vendors is the NOS. Legacy switch vendors put their own NOS on top of commodity switch hardware, creating a proprietary switch. By contrast, white boxes run whatever NOS the customer chooses. The major vendors also charge a premium for their switches, often twice as much as a comparable white box switch.

Most white box switches employ an “open” Linux-based NOS that is intended to be disaggregated, or abstracted, from the underlying network hardware – similar to how virtual servers disaggregate the server OS from the underlying server hardware. Hardware-software disaggregation means the user is free to swap out either the hardware or the NOS at will; they are not tied to one another as with legacy switches from major vendors.

That means as newer white box switch hardware is developed (or newer software), customers can swap out their existing platform for a new one – including a platform from a different vendor. Essentially, customers are free to upgrade their hardware and software whenever it makes sense from a price/performance perspective.

White box/brite box networking also creates the potential for an open-standards-based, portable NOS that can run on a wide variety of switches from multiple vendors, in contrast to the traditional architecture, which ties the network to a single legacy equipment vendor.

The Open Compute Networking Project, for example, is a set of disaggregated, open network technologies, including a Linux-based NOS and developer tools managed under the auspices of the Open Compute Project (OCP).

The Open Network Install Environment (ONIE), an open source initiative driven by vendors to define an open “install environment” for white box switches, is also a project of the OCP. ONIE seeks to enable NOSs that are portable from one white box switch to another. The goal is to achieve a higher level of flexibility in the networking industry.

White box networking can also support software-defined networking (SDN) because the switches are by nature highly programmable. Here a central SDN controller can be used to send routing and control instructions to a series of white box switches installed throughout the network.

Benefits of white box networking in practice

Open, white box networking can bring a number of significant benefits to enterprise networks, including campus networks and remote office sites at the access edge.

For one, it’s a cost-effective way to deal with the onslaught of traffic that companies are now seeing in their campus networks and at the access edge. This is increasingly the result of Internet of Things (IoT) applications connecting hundreds or thousands of devices and sensors to the network edge, as well as the bring-your-own-device trend, with each user connecting multiple devices to the network. Many of these devices are capable of producing or consuming huge amounts of data, such as from streaming video. Significant use of cloud services is yet another driver, with traffic entering the network at the edge before it gets to the cloud.

In a traditional enterprise network, companies would deal with this increased traffic load by upgrading their edge switches or switch chassis – an expensive proposition when buying from the traditional vendors. Open white box switches, on the other hand, are far more cost-effective because they come without the markups that the major vendors apply.

The open white box approach can also facilitate the deployment of a more streamlined leaf-spine network topology rather than the traditional three-tier model, with its static access, aggregation and core layers. With the leaf-spine model, enterprises can collapse the access and aggregation layers into one. In many cases, the network can then be configured such that traffic between any two leaf switches crosses only a single spine switch, a single “hop”, providing more streamlined connections and improved performance as compared to the three-tier model.
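The difference in path length between the two topologies can be illustrated with a small breadth-first search. The sketch below builds one toy leaf-spine fabric (four leaves, two spines) and one toy three-tier network (four access switches, two aggregation switches, one core switch) and counts the links between every pair of edge switches. The switch names and sizes are made up for the illustration and are not taken from any real deployment.

```python
from collections import deque
from itertools import combinations


def build_graph(links):
    """Build an undirected adjacency map from a list of (switch, switch) links."""
    graph = {}
    for a, b in links:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def link_count(graph, src, dst):
    """Return the number of links on the shortest path from src to dst (BFS)."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist
        for neighbor in graph[node]:
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, dist + 1))
    raise ValueError(f"no path from {src} to {dst}")


def edge_path_lengths(graph, prefix):
    """Sorted distinct path lengths between all pairs of switches whose name starts with prefix."""
    edge_switches = sorted(name for name in graph if name.startswith(prefix))
    return sorted({link_count(graph, a, b) for a, b in combinations(edge_switches, 2)})


# Toy leaf-spine fabric: every leaf connects to every spine.
leaf_spine = build_graph(
    [(f"leaf{i}", f"spine{j}") for i in range(1, 5) for j in range(1, 3)]
)

# Toy three-tier network: access -> aggregation -> core.
three_tier = build_graph([
    ("acc1", "agg1"), ("acc2", "agg1"),
    ("acc3", "agg2"), ("acc4", "agg2"),
    ("agg1", "core1"), ("agg2", "core1"),
])

print("leaf-spine:", edge_path_lengths(leaf_spine, "leaf"))  # [2]
print("three-tier:", edge_path_lengths(three_tier, "acc"))   # [2, 4]
```

In this toy model every leaf-to-leaf path is two links long, meaning exactly one spine switch sits in between. In the three-tier network the path length depends on whether two access switches share an aggregation switch, and traffic that has to cross the core travels over four links.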

As implemented by vendors including Pica8, the leaf-spine architecture also improves performance by implementing Multi-Chassis Link Aggregation (MLAG) technology as a replacement for the Spanning Tree Protocol (STP). While STP supports two paths between any two network points, only one of them can be active at any given time. MLAG allows both links to be active, which effectively doubles the amount of available network bandwidth. MLAG also converges much faster when the network has to adjust, for example to route around failed links.
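The bandwidth claim is simple arithmetic, sketched below under a simplifying assumption: all redundant uplinks run at the same speed, STP forwards on exactly one of them, and MLAG forwards on all of them. The function name `usable_gbps` and the 10G link speed are illustrative choices, not part of any vendor's software.

```python
def usable_gbps(links_up: int, link_gbps: int, mode: str) -> int:
    """Usable uplink bandwidth for a set of equal-speed redundant links.

    Simplified model: STP keeps one link forwarding and blocks the rest,
    while MLAG forwards on every link that is up.
    """
    if links_up < 0 or link_gbps < 0:
        raise ValueError("links_up and link_gbps must be non-negative")
    if mode == "stp":
        return min(links_up, 1) * link_gbps
    if mode == "mlag":
        return links_up * link_gbps
    raise ValueError(f"unknown mode: {mode}")


# Two healthy 10G uplinks.
print(usable_gbps(2, 10, "stp"))   # 10
print(usable_gbps(2, 10, "mlag"))  # 20

# After one uplink fails, both modes are left with a single 10G link.
print(usable_gbps(1, 10, "stp"))   # 10
print(usable_gbps(1, 10, "mlag"))  # 10
```

The model ignores how traffic is hashed across the MLAG members, so real throughput for a single flow may still be limited to one link's speed.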

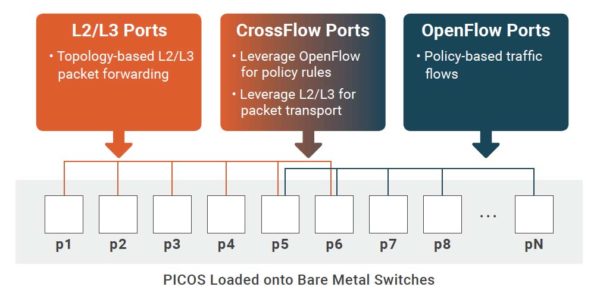

Some white box networking vendors support the use of SDNs alongside traditional Layer 2/Layer 3 routing protocols (see graphic, above). Pica8’s unique Crossflow technology takes this a step further by allowing every white box switch port in a network to support L2/L3 traffic as well as SDN traffic at the same time. That can be used to send security updates to the switch, for example, without interrupting normal traffic flows. Similarly, the capability can be used to conduct deep security monitoring on switch ports, again without interrupting traffic.

Automation and simplified operations

As networks continue to grow, network automation has become a core requirement in the enterprise. In a first for open white box networking, Pica8 now offers AmpCon™, a complete automation framework for deploying and managing enterprise networks.

AmpCon slashes the operational overhead required to handle ongoing configuration management and upgrades as well as policy and security changes. Pica8’s cost-effective framework not only reduces OpEx and CapEx, it also allows the integration of any number of Pica8-supported, multi-vendor 1G-to-100G open white box/brite box switches.

Pica8’s AmpCon automation framework automates tasks including network switch provisioning, configuration, licensing and other ongoing management functions. It includes:

Quick-start GUI

Activation and configuration of hundreds of remote switches is so simple it can be performed by entry-level IT personnel (no coding experience required).

Centralized CLI

Enables network portability by providing a centralized standard command line interface for advanced orchestration services and/or network services.

Auto/ZTP provisioning solution

Provides auto-provisioning and configuration of an entire network during deployment, along with network-wide visibility.

Aggregated management

Aggregates Syslog and SNMP management data so that it can be viewed and filtered on various parameters for troubleshooting and monitoring purposes.

Redundant network connectivity

Provides improved performance and high-availability redundancy by using Multi-Chassis Link Aggregation (MLAG) to address insufficient uplink bandwidth issues.
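As a rough idea of what filtering aggregated Syslog data on a parameter can look like, the sketch below filters messages by severity. It relies only on the standard syslog convention that each message starts with a `<PRI>` value equal to facility × 8 + severity, where lower severity numbers are more urgent. It is not AmpCon code; the function names and the sample messages are invented for this example.

```python
import re

# A syslog message starts with "<PRI>", where PRI = facility * 8 + severity.
PRI_RE = re.compile(r"^<(\d{1,3})>")

SEVERITY_NAMES = ["emerg", "alert", "crit", "err", "warning", "notice", "info", "debug"]


def parse_pri(line):
    """Return (facility, severity) for a syslog line, or None if it has no valid PRI."""
    match = PRI_RE.match(line)
    if match is None:
        return None
    pri = int(match.group(1))
    if pri > 191:  # highest valid value: facility 23, severity 7
        return None
    return divmod(pri, 8)


def filter_by_severity(lines, max_severity):
    """Keep lines whose severity number is at or below max_severity (more urgent)."""
    kept = []
    for line in lines:
        parsed = parse_pri(line)
        if parsed is not None and parsed[1] <= max_severity:
            kept.append(line)
    return kept


# Invented sample messages from three hypothetical switches.
SAMPLE = [
    "<187>switch-a: uplink port 49 down",   # 187 = 23 * 8 + 3 -> err
    "<190>switch-b: configuration saved",   # 190 = 23 * 8 + 6 -> info
    "<28>switch-c: fan speed high",         # 28  = 3 * 8 + 4  -> warning
]

for line in filter_by_severity(SAMPLE, max_severity=4):
    facility, severity = parse_pri(line)
    print(SEVERITY_NAMES[severity], line)
```

With `max_severity=4`, the error and warning messages are kept and the informational one is dropped. A real aggregator would typically also filter on fields such as source host and timestamp.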

Robust security, backwards-compatible NAC

Unlike the data center, the access edge is where users enter your network, so you also need robust security solutions, including network access control (NAC) and virtual private networks (VPNs), along with wireless networks.

PICOS interoperates with NAC solutions from major vendors, including Cisco Identity Services Engine (ISE), Aruba ClearPass and the open source PacketFence project, as well as with access points (APs) from any wireless network vendor.

Opting for an open network from Pica8 doesn’t mean you have to swap out everything at once (although that’s certainly an option). Pica8’s PICOS is backward compatible with existing legacy networks and easily integrates with switches from Cisco, Juniper, Arista, HPE, Aruba, and others. Upgrade gradually, building by building, floor by floor, or however you best see fit.
